Log Shipping

When enabled, the Log Shipping feature ships copies of the selected log type(s) to a configured cloud storage bucket (e.g. Amazon Web Services (AWS) S3 buckets, Google Cloud Storage buckets, Azure Blob Storage). Shipped logs are JSON-formatted and delivered in batches of up to 50 MB or at 5-minute intervals. The shipped logs can then be stored, processed, or analyzed. Logs are an important asset for understanding how an application is used, diagnosing connectivity issues, tuning performance, identifying usage patterns, and analyzing service interruptions.
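The batching rule described above can be pictured as a simple threshold check. The following is a minimal sketch only: the function name, the constants, the reading of "50 MB" as 50,000,000 bytes, and the assumption that a batch ships as soon as either limit is reached are all illustrative and do not describe the platform's actual implementation.

```python
# Illustrative only: names and thresholds are assumptions, not platform internals.
MAX_BATCH_BYTES = 50 * 1000 * 1000   # "50 MB", assumed here to mean 50,000,000 bytes
MAX_BATCH_AGE_SECONDS = 5 * 60       # the 5-minute interval


def batch_is_due(batch_bytes: int, batch_age_seconds: float) -> bool:
    """Return True once a batch reaches either the size limit or the age limit."""
    return (
        batch_bytes >= MAX_BATCH_BYTES
        or batch_age_seconds >= MAX_BATCH_AGE_SECONDS
    )
```

Under these assumptions, a low-traffic environment would mostly produce small files on the time limit, while a busy environment would more often reach the size limit first.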

Files from more than one of an organization’s applications and environments can be shipped to a single cloud storage bucket.

Access

Prerequisites

- To configure and enable Log Shipping, a user must have at minimum an App admin role for that application or an Org admin role.

- The configuration steps for Log Shipping require the user to have access to the cloud storage provider’s setting values.

- An object store that meets all cloud storage bucket requirements has been created and is ready to receive shipped files.

In the VIP Dashboard for an application:

- Select an environment from the environment dropdown located at the upper left of the VIP Dashboard.

- Select “Logs” from the sidebar navigation at the left of the screen.

- Select “Log Shipping” from the submenu.

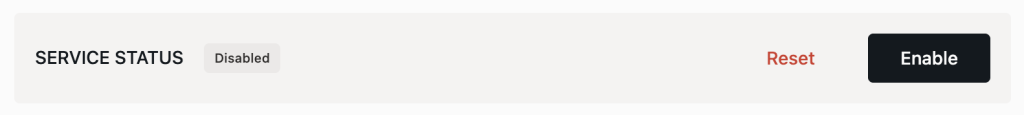

In the upper area of the “Log Shipping” panel, the box titled “Service Status” indicates if the Log Shipping feature for the environment is currently “Enabled”, “Disabled”, or “Awaiting configuration”.

Settings for Log Shipping can be updated at any time. If Log Shipping is currently enabled, it must be disabled before the setting options can be accessed. Select the button labeled “Disable”, then update the settings as needed.

Shipment Contents

The Log Shipping feature can ship one or more types of logs for an environment. In the section titled “Shipping Contents”:

- Select one or more types of logs to be shipped to a cloud storage container on a regular cadence. Options for log types include:

    - HTTP request edge logs

    - HTTP request origin logs

    - Application logs

    - Batch logs (WordPress only)

    - Slow query logs (WordPress only)

- Select the button labeled “Continue” to save the selection(s) and enter the first step of Cloud Configuration.

Cloud Configuration

In addition to completing a selection of log types in the section titled “Shipping Contents”, all steps in the section titled “Cloud Configuration” must be completed before Log Shipping can be enabled.

Select Provider

- Select a cloud storage provider from the dropdown options (e.g. “AWS S3”, “Google Cloud Storage”, or “Azure Blob Storage”).

- Select the button labeled “Continue”.

Prerequisites

To complete the configuration of an AWS S3 bucket, a user must have sufficient access permissions on AWS to:

- Modify the AWS bucket policy of the AWS S3 bucket.

- Create an AWS CloudFormation stack in the AWS account.

Configure Cloud Connection

- Configure the AWS S3 bucket by adding valid entries for the required fields: “AWS Account ID” (How to find your AWS Account ID), “Bucket Name”, and “Bucket Region”. Entering a unique value for the “S3 Prefix” field is optional but useful if logs from more than one environment in the organization are shipped to the same cloud storage bucket.

- Select the button labeled “Continue”.
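Before entering the values above, it can help to sanity-check them locally. The sketch below is a hypothetical helper, not part of the VIP Dashboard or of any AWS SDK. It relies on two general AWS facts (account IDs are 12 digits; bucket names are 3 to 63 characters drawn from lowercase letters, digits, dots, and hyphens, beginning and ending with a letter or digit) and deliberately does not cover every AWS naming rule. The region is only checked for being non-empty.

```python
import re
from typing import List

# Partial checks only; AWS applies further bucket naming rules not covered here.
ACCOUNT_ID_RE = re.compile(r"\d{12}")
BUCKET_NAME_RE = re.compile(r"[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]")


def check_aws_fields(account_id: str, bucket_name: str, bucket_region: str) -> List[str]:
    """Return a list of human-readable problems; an empty list means no problem was found."""
    problems = []
    if not ACCOUNT_ID_RE.fullmatch(account_id):
        problems.append("AWS Account ID should be exactly 12 digits")
    if not BUCKET_NAME_RE.fullmatch(bucket_name):
        problems.append("Bucket Name has an invalid length or invalid characters")
    if not bucket_region.strip():
        problems.append("Bucket Region is empty")
    return problems
```

For example, `check_aws_fields("123456789012", "example-bucket", "us-east-1")` returns an empty list. The dashboard's “Run Test” step remains the authoritative check.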

Test Configuration

Based on the values entered in “Step 2 of 3: Configure”, a CloudFormation Template will populate the field labeled “Generated CloudFormation Template”. To continue the process of enabling Log Shipping:

- Select the button labeled “Download Template” to download the CloudFormation Template JSON file.

- Follow the instructions for Creating a stack on the AWS CloudFormation console to use the CloudFormation Template JSON file to create a stack in AWS CloudFormation.

- Select the button labeled “Run Test” to test the configuration of the S3 bucket. A test file named wpvip-test-connection will be uploaded to the S3 bucket as part of the verification process. This file will always be present in a site’s configured S3 bucket and path, alongside the dated folders that contain the logs themselves.

Prerequisites

To complete the configuration of a Google Cloud bucket, a user must have a Google Cloud Platform Service account with sufficient permissions to:

- Create and configure a Google Cloud bucket.

- Generate and download an authentication key as a JSON file.

Configure Cloud Connection

- Configure the Google Cloud bucket by adding valid entries for the required fields: “GCP Bucket Name” and “GCP Credentials JSON”. Entering a unique value for the “GCP Prefix” field is optional but useful if logs from more than one environment in the organization are shipped to the same cloud storage bucket.

- Select the button labeled “Continue”.

Test Configuration

Select the button labeled “Run Test” to test the configuration of the cloud storage bucket.

A test file named wpvip-test-connection-[YYYY-MM-DD-HH:mm:ss] will be uploaded to the cloud storage bucket as part of the verification process. This file will always be present in an environment’s configured cloud storage bucket and path, alongside the dated folders that contain the logs themselves.
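Because the test file stays in the bucket next to the dated log folders, any script that processes shipped logs may want to skip it. The following is a minimal sketch of such a filter. The function name is hypothetical, and the pattern assumes that the square brackets in the file name are placeholder notation rather than literal characters. It also accepts the plain name wpvip-test-connection, which has no timestamp.

```python
import re

# Matches "wpvip-test-connection" with an optional "-YYYY-MM-DD-HH:mm:ss" suffix.
# Assumption: the brackets shown in the documented file name are not literal.
TEST_FILE_RE = re.compile(
    r"wpvip-test-connection(-\d{4}-\d{2}-\d{2}-\d{2}:\d{2}:\d{2})?"
)


def is_connection_test_file(object_key: str) -> bool:
    """Return True if the last path segment of the object key is a connection test file."""
    file_name = object_key.rsplit("/", 1)[-1]
    return TEST_FILE_RE.fullmatch(file_name) is not None
```

A log-processing job could call this on each object key it lists and ignore keys for which it returns `True`.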

Prerequisites

To complete the configuration of an Azure Blob Cloud bucket, a user must have:

- Access to an Azure subscription with a storage account.

- At minimum a Storage Blob Data Contributor role to have sufficient permissions to create and configure a storage account, a container, and a SAS token.

- Either Azure CLI installed and authenticated (for the CLI method) or Azure Portal access with sufficient privileges (for the Portal method), in order to create the necessary container and SAS token.

Configure Cloud Connection

- Configure the Azure Blob bucket by adding valid entries for the following fields:

    - Azure Storage Account Name: Enter the name of the relevant Azure storage account.

    - Azure Container Name: Create a container in Azure and paste the name of the container in this field. If a firewall is needed to restrict access to only IPs within the IP range of the VIP Platform, create the container with a dedicated storage account.

    - Azure Prefix: Entering a unique value in this field is optional but useful if logs from more than one environment in the organization are shipped to the same cloud storage bucket.

    - Azure SAS Token: Create a Shared Access Signature (SAS) token and paste the value of the token in this field. A stored access policy can be optionally defined to provide an additional level of control over permissions for the SAS token and its time of expiry.

- Select the button labeled “Continue”.

Test Configuration

Select the button labeled “Run Test” to test the configuration of the cloud storage bucket.

A test file named wpvip-test-connection-[YYYY-MM-DD-HH:mm:ss] will be uploaded to the cloud storage bucket as part of the verification process. This file will always be present in an environment’s configured cloud storage bucket and path, alongside the dated folders that contain the logs themselves.

Next, Log Shipping must be enabled to begin shipping the selected logs to the configured bucket.

Enable Log Shipping

After completing the required Cloud Configuration settings, the Log Shipping feature must be enabled in order for file shipping to the configured cloud storage bucket to begin.

In the upper area of the Log Shipping panel:

- Select the button labeled “Enable”.

Disable Log Shipping

- Navigate to the VIP Dashboard for an application.

- Select an environment from the environment dropdown located at the upper left of the VIP Dashboard.

- Select “Logs” from the sidebar navigation at the left of the screen.

- Select “Log Shipping” from the submenu.

- Select the button labeled “Disable” in the upper area of the Log Shipping panel.

Path structure of shipped logs

Each log type that is configured and enabled to ship to a cloud storage bucket will be delivered within a path structure that follows the pattern: ${bucket_name}/${optional_prefix}/${log_type}/YYYY/MM/dd/...

For example, the path structure for slow query logs shipped to a bucket named “example-bucket” with the prefix “example-prefix” would be: example-bucket/example-prefix/slow_query/2026/03/07/...
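The pattern above can be expressed as a small helper for locating a given day's logs. This is a sketch only: the function name and signature are hypothetical, and it assumes that when no prefix is configured the prefix segment is simply omitted from the path. The only log type directory names confirmed on this page are `slow_query` and `http_requests_edge`; any other value passed as `log_type` would need to be checked against the bucket's actual contents.

```python
from datetime import date
from typing import Optional


def shipped_log_path(
    bucket_name: str,
    log_type: str,
    day: date,
    optional_prefix: Optional[str] = None,
) -> str:
    """Build ${bucket_name}/${optional_prefix}/${log_type}/YYYY/MM/dd/ for one day."""
    parts = [bucket_name]
    if optional_prefix:
        # Assumption: an unset prefix contributes no path segment at all.
        parts.append(optional_prefix.strip("/"))
    parts.append(log_type)
    parts.append(day.strftime("%Y/%m/%d"))
    return "/".join(parts) + "/"
```

Calling `shipped_log_path("example-bucket", "slow_query", date(2026, 3, 7), "example-prefix")` reproduces the example path above, ending at the day folder.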

Legacy path structure

The legacy version of Log Shipping only shipped HTTP Request Edge Logs (previously named “HTTP request Log Shipping”). The delivery path for those logs followed the pattern: ${bucket_name}/${optional_prefix}/YYYY/MM/dd/____

Logs will continue to ship to that legacy path structure until any configuration updates are made in the Log Shipping panel (e.g. switching from an S3 bucket to Azure Blob Storage or opting in to ship application logs as well). After updating Log Shipping configurations, HTTP Request Edge Logs will be delivered to the updated path structure: ${bucket_name}/${optional_prefix}/http_requests_edge/YYYY/MM/dd/...
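A bucket that has received logs both before and after a configuration update may contain objects under both layouts. The sketch below shows one way a processing script might tell them apart. The function name is hypothetical, and it assumes the object key is given relative to the bucket (without the bucket name) and that the configured prefix, if any, is known to the caller.

```python
import re

# A legacy key has the date folders directly after the optional prefix.
DATE_DIR_RE = re.compile(r"\d{4}/\d{2}/\d{2}(/|$)")


def is_legacy_path(object_key: str, optional_prefix: str = "") -> bool:
    """Return True if the key follows the legacy ${optional_prefix}/YYYY/MM/dd/ layout."""
    prefix = optional_prefix.strip("/")
    if prefix:
        if not object_key.startswith(prefix + "/"):
            raise ValueError("object key does not start with the given prefix")
        object_key = object_key[len(prefix) + 1:]
    # re.match anchors at the start of the remaining key.
    return DATE_DIR_RE.match(object_key) is not None
```

With the prefix “example-prefix”, a key beginning `example-prefix/2026/03/07/` would be classified as legacy, while one beginning `example-prefix/http_requests_edge/2026/03/07/` would not.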

Last updated: March 12, 2026